
PRODUCT DESIGN • SYSTEMS THINKING • AI STRATEGY

Process Lab

An AI-powered workflow diagnostic engine that reconstructs processes, classifies friction, quantifies inefficiency, and designs small, testable AI interventions. The goal was not to “add AI,” but to apply constraint theory and systems thinking to intelligently redesign workflows.

This is a fully functional working prototype that I developed using Claude Code, Google AI Studio, and Google Stitch as a side project at the Department of State. I was responsible for designing the friction taxonomy, building the friction scoring index, designing the AI opportunity detection logic, creating the experiment design framework, structuring executive-ready outputs, and defining the UI and interaction model.

ROLE

Product Designer, Service Designer, Systems Architect

Teams know the process is broken. They don't know how to quantify it.

Teams describe process pain in vague terms: "approvals take too long," "there's too much back and forth," "leadership has to sign off on everything." But vague descriptions lead to vague solutions. What was missing in our analyses was a structured way to decompose a workflow, classify what's actually causing the friction, measure how severe it is, and then test targeted interventions rather than overhauling everything at once.

Most AI conversations start with tools. Someone hears about a new capability and asks "where can we apply this?" That's backwards. Process Lab starts with structure. It helps teams understand the friction first, then determine whether AI is the right lever to pull.

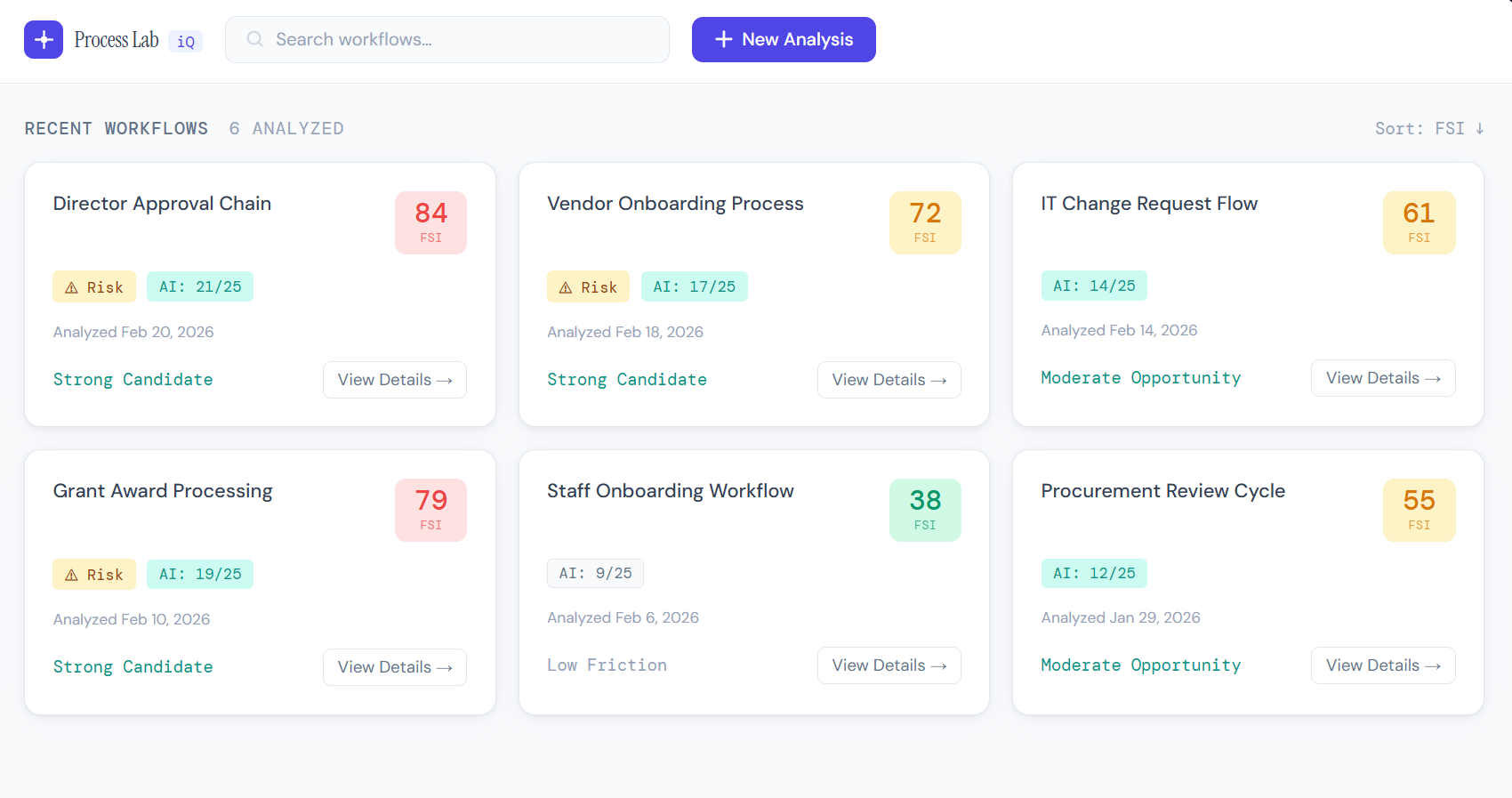

Process Lab main home page.

CHALLENGE

Five-Stage Diagnostic Pipeline

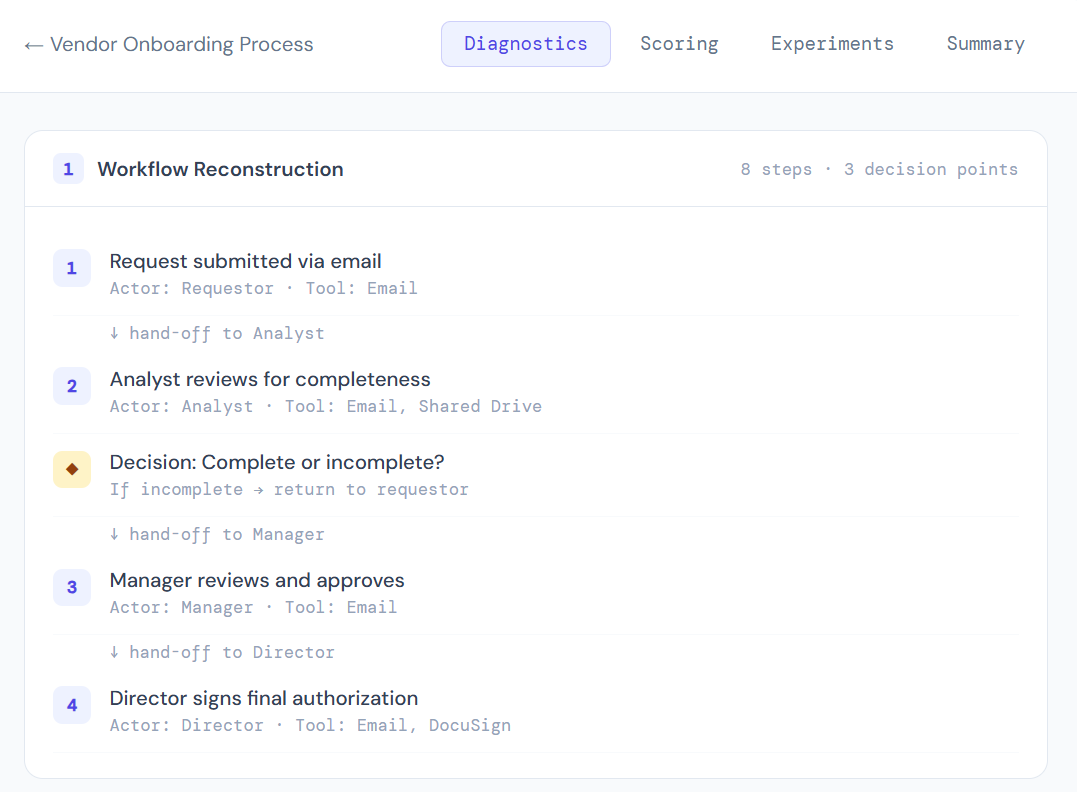

Process Lab operates as a structured pipeline. Each stage builds on the previous one, moving from raw process description to actionable, testable experiments. The system outputs a reconstructed workflow map, a friction map with tagged constraints, quantified scoring, an AI Opportunity Index, three micro-experiments with deployment plans, and an executive-ready summary.

2

Friction Classification

Tag each step with friction type and severity

HOW IT WORKS

1

Workflow Reconstruction

Rebuild the process from user input into a structured map

3

Constraint Identification

Locate the primary bottleneck limiting throughput

4

AI Opportunity Mapping

Evaluate where AI patterns apply structurally

5

Experiment Design

Generate testable, reversible micro-interventions
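To make the constraint identification stage concrete, here is a minimal sketch of one way to locate the primary bottleneck: treat the step with the lowest capacity as the limit on throughput, in the spirit of constraint theory. The `Step` shape, the `capacity_per_day` metric, and the sample workflow are assumptions made for illustration; they are not taken from Process Lab itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One reconstructed workflow step (hypothetical shape)."""
    name: str
    capacity_per_day: float  # assumed metric: items this step can finish per day


def find_constraint(steps: list[Step]) -> Step:
    """Return the step with the lowest capacity, which caps overall throughput."""
    if not steps:
        raise ValueError("workflow has no steps")
    return min(steps, key=lambda step: step.capacity_per_day)


# Invented sample workflow, for illustration only.
workflow = [
    Step("Draft request", 40),
    Step("Supervisor review", 25),
    Step("Leadership sign-off", 6),
    Step("Publish", 50),
]

constraint = find_constraint(workflow)
print(f"Primary constraint: {constraint.name}")
```

In this sketch, improving any step other than the returned one leaves end-to-end throughput unchanged, which is why the later stages concentrate on the constraint.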

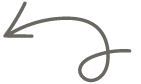

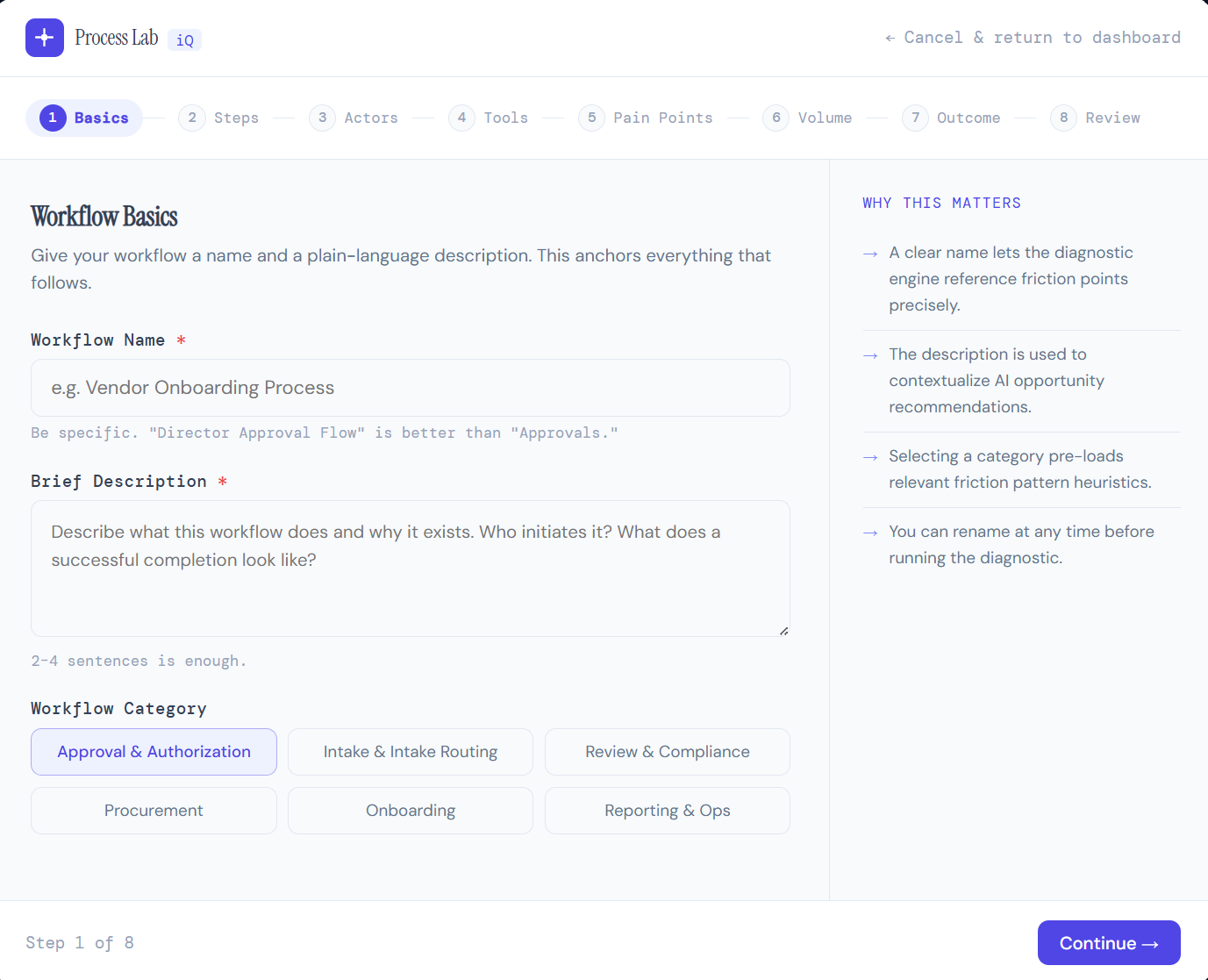

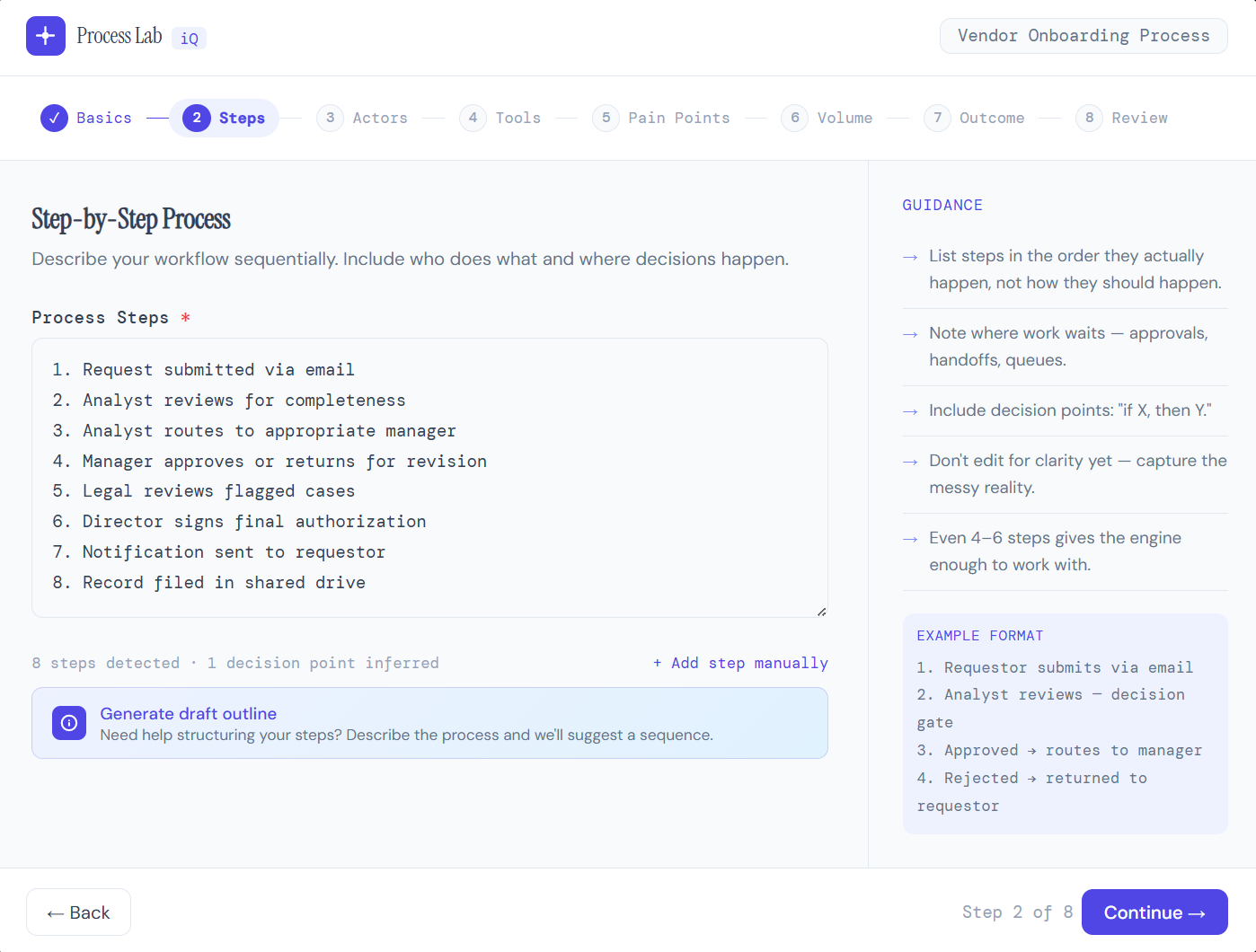

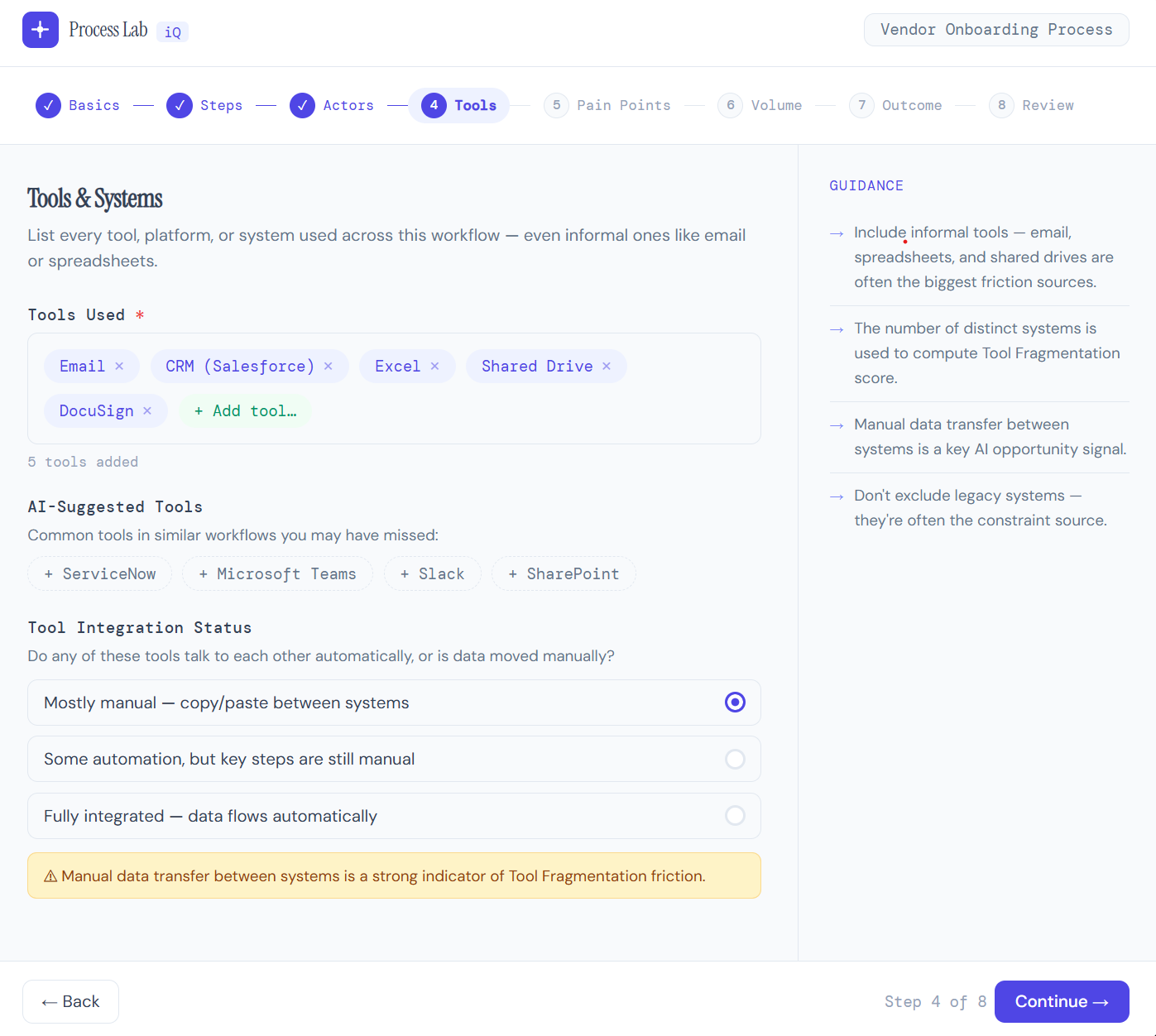

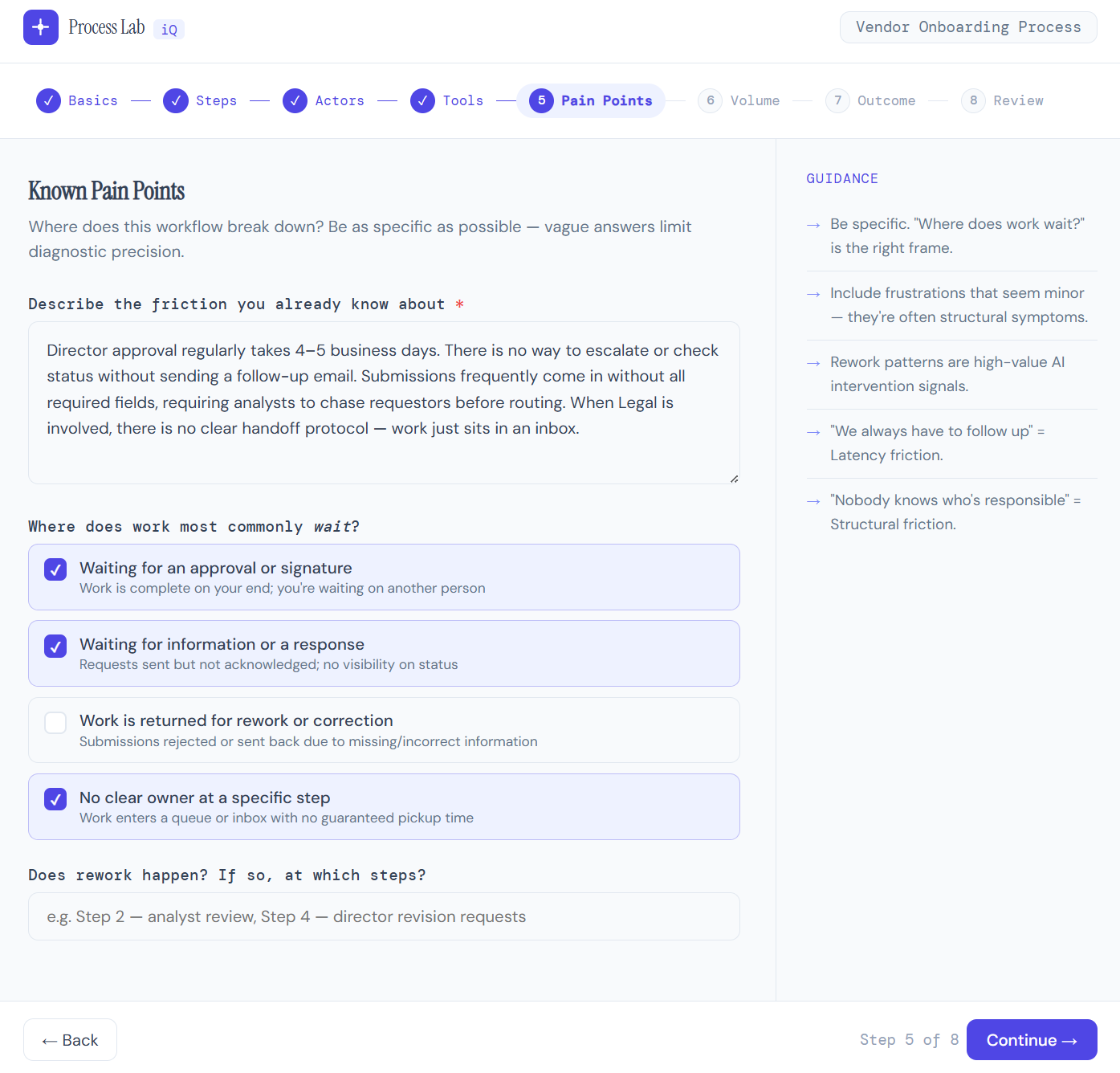

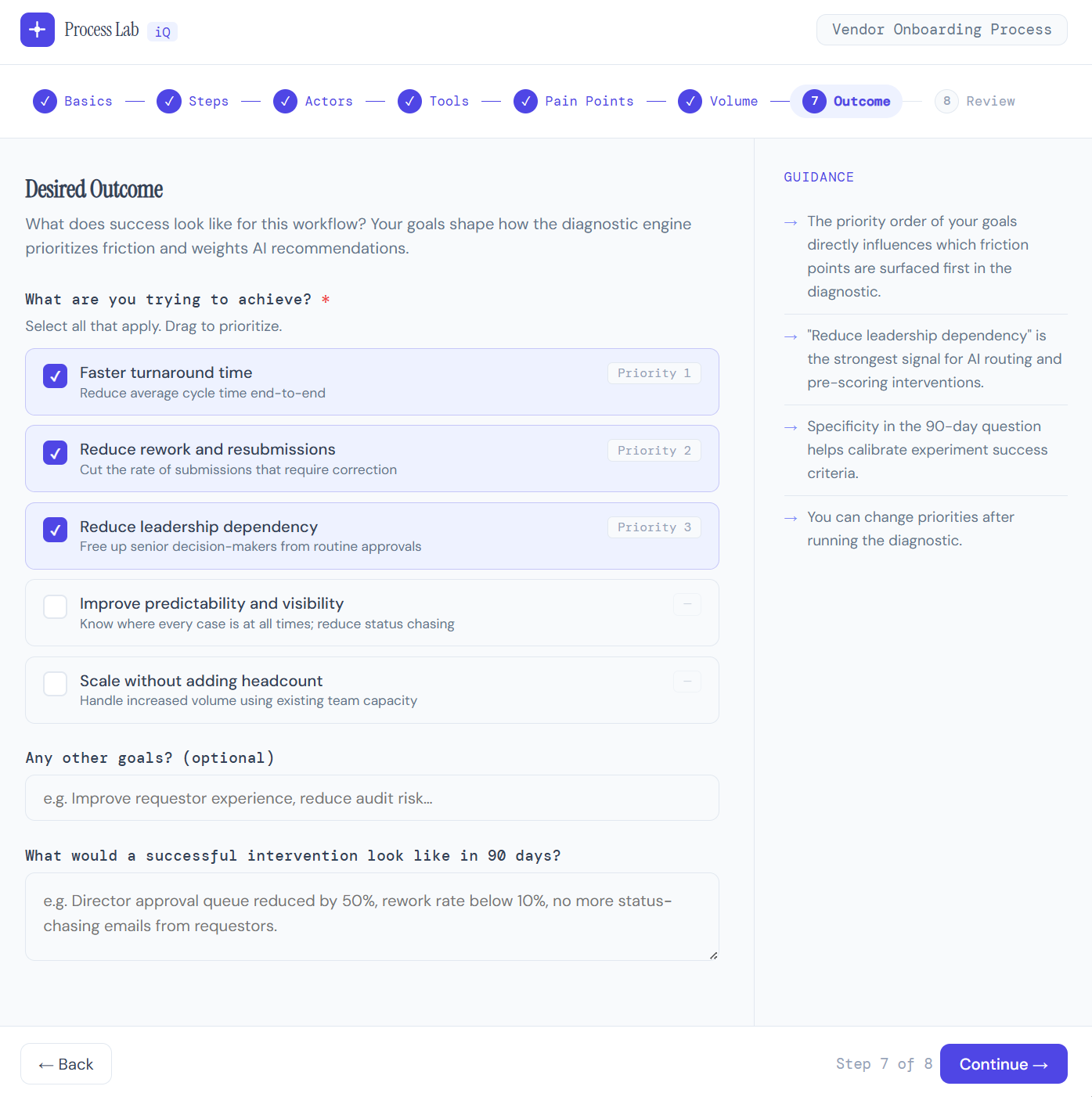

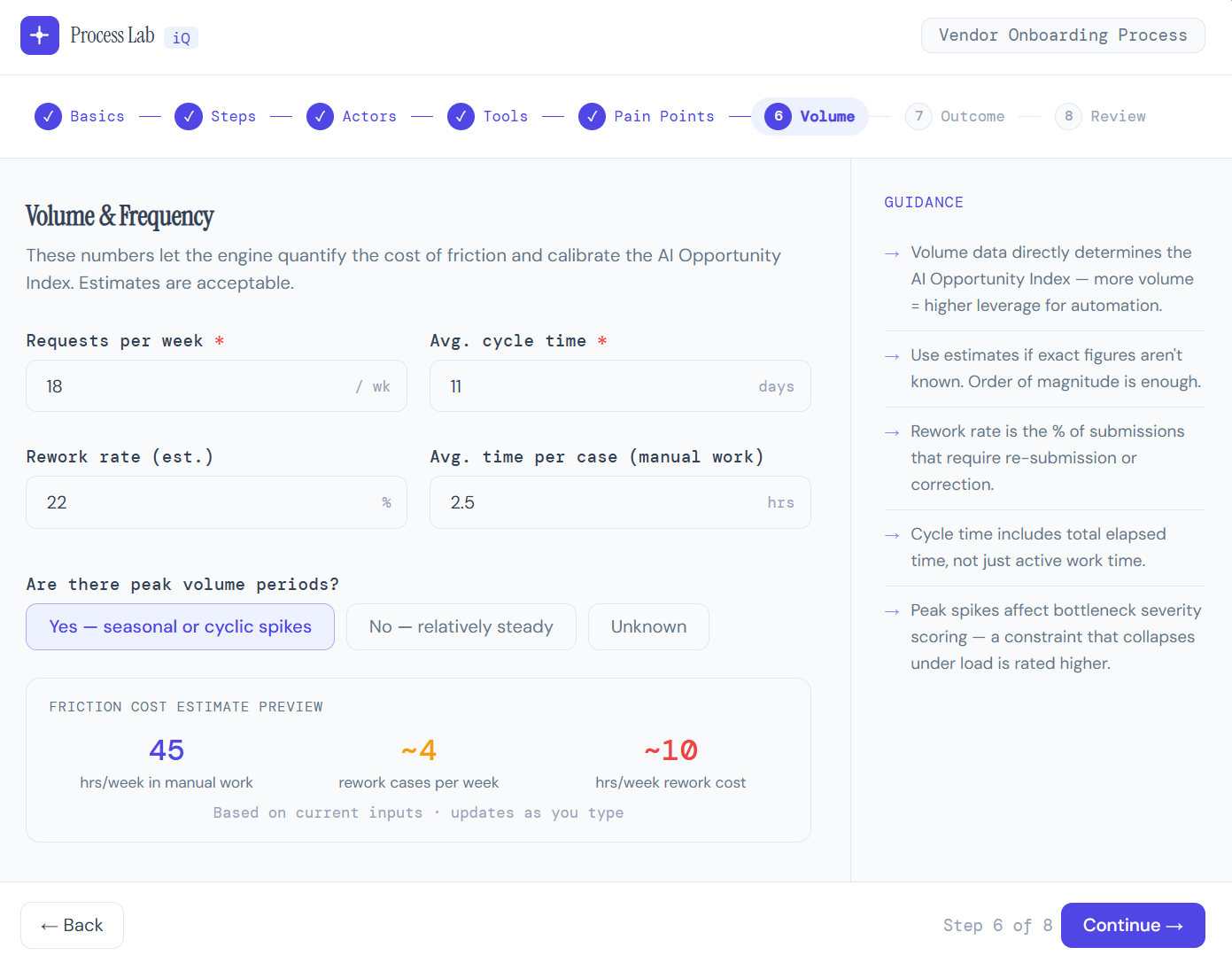

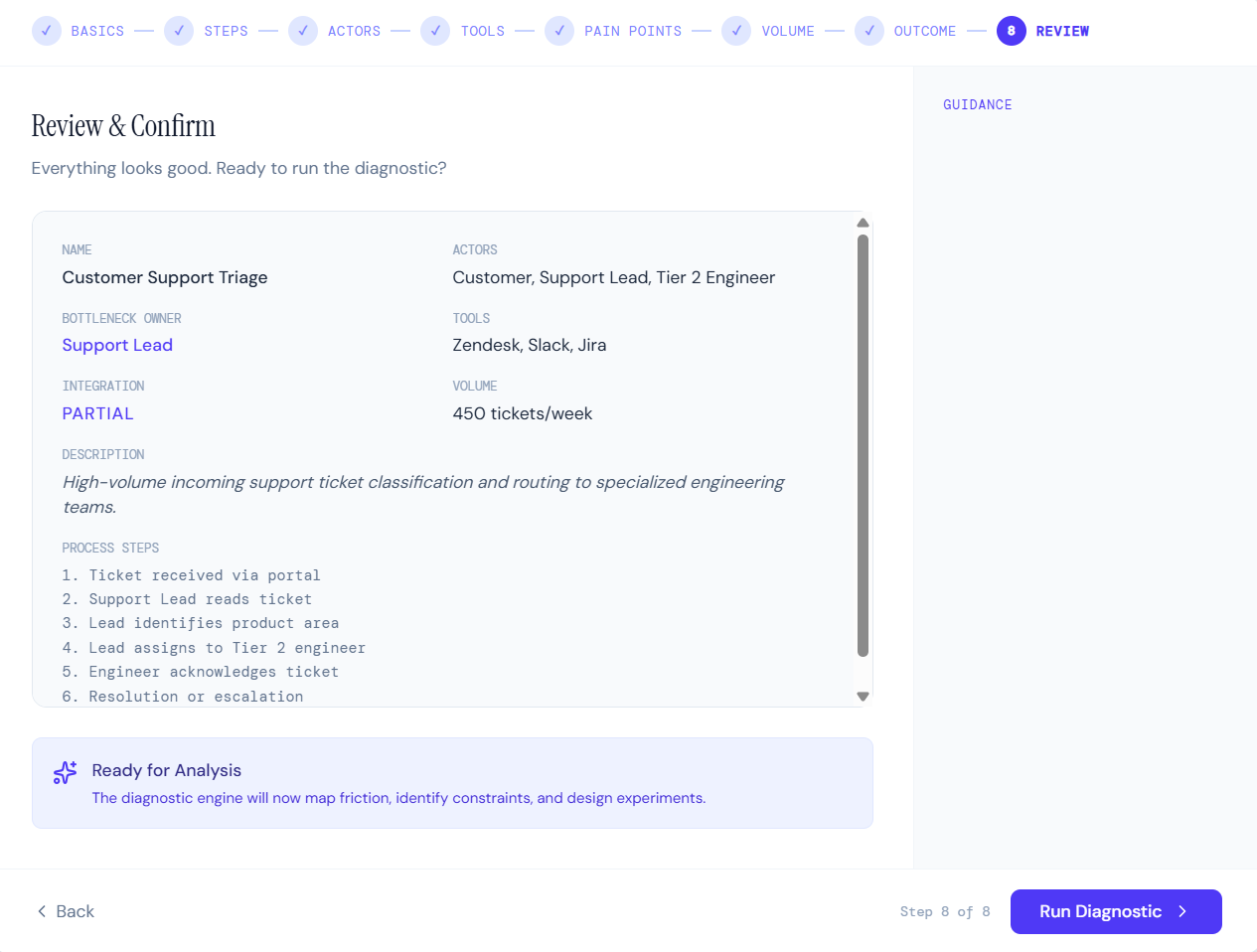

WORKFLOW INPUT & RECONSTRUCTION

A seven-step intake wizard prompts the user to describe their workflow in plain language. It walks them through the critical components of the process (steps, actors, tools, pain points, volume, and desired outcome), offering guidance and examples along the way. The system then reconstructs the description into discrete, analyzable steps.
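As a rough illustration of what "plain language in, discrete steps out" can look like, the sketch below captures the intake components named above in a record and splits a free-text description into steps. The field names and the rule-based splitter are assumptions; the splitter is a naive stand-in for the reconstruction logic, not the method the prototype uses.

```python
import re
from dataclasses import dataclass


@dataclass
class WorkflowIntake:
    """Intake components named in the text; field names are hypothetical."""
    description: str
    actors: list[str]
    tools: list[str]
    pain_points: list[str]
    volume_per_month: int
    desired_outcome: str


@dataclass(frozen=True)
class ProcessStep:
    index: int
    description: str


# Split on sentence ends, semicolons, and the connective "then".
_BOUNDARY = re.compile(r"\.\s+|;\s*|,?\s+then\s+")


def reconstruct_steps(intake: WorkflowIntake) -> list[ProcessStep]:
    """Naive stand-in: break the description into ordered, discrete steps."""
    parts = (part.strip(" .") for part in _BOUNDARY.split(intake.description))
    return [ProcessStep(i, text) for i, text in enumerate((p for p in parts if p), 1)]


# Invented example input, for illustration only.
intake = WorkflowIntake(
    description=(
        "Analyst drafts the memo, then the supervisor reviews it. "
        "Leadership signs off; the office publishes."
    ),
    actors=["Analyst", "Supervisor", "Leadership"],
    tools=["Email", "Shared drive"],
    pain_points=["Approvals take too long"],
    volume_per_month=30,
    desired_outcome="Faster sign-off",
)

for step in reconstruct_steps(intake):
    print(step.index, step.description)
```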

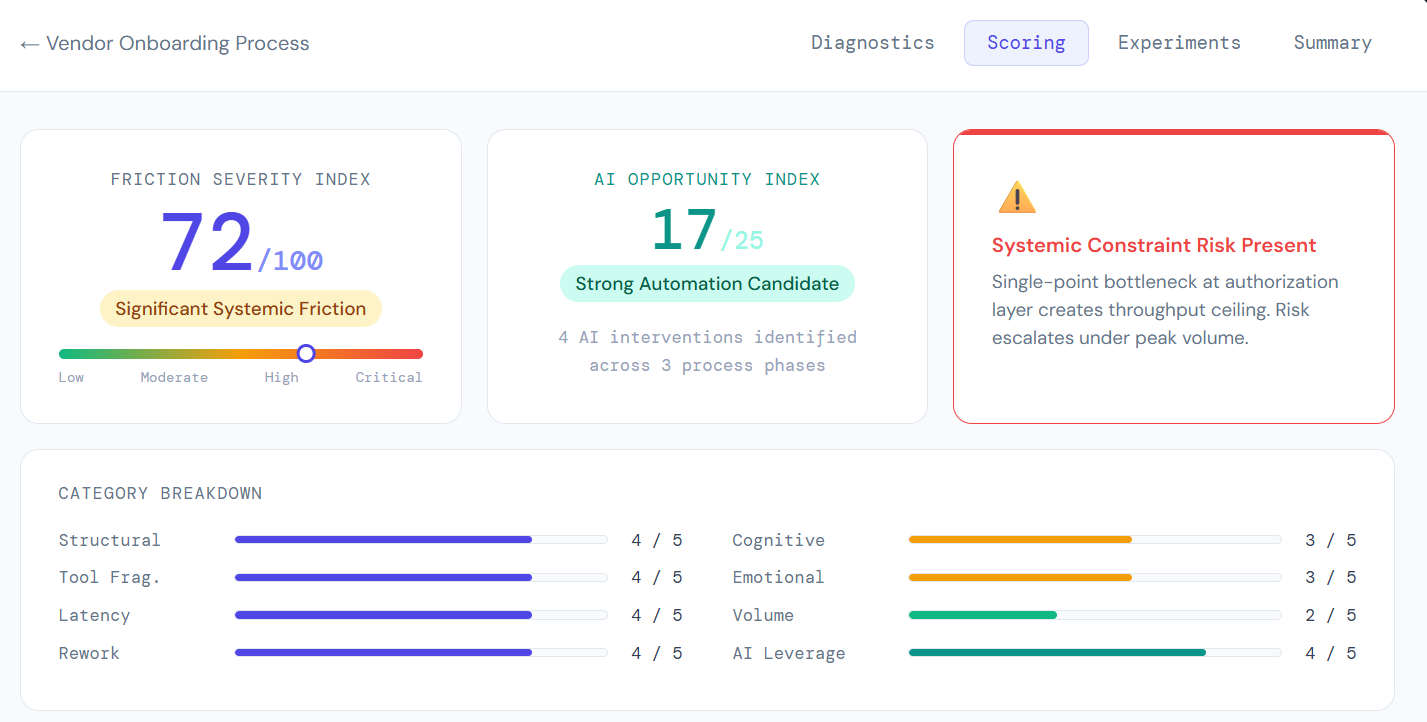

Weighted Friction Scoring Index scorecard with the breakdown of friction categories below. The AI Opportunity Index surfaces at the top alongside the overall friction score.

FRICTION SCORING INDEX

The Friction Scoring Index transforms qualitative friction into a weighted, repeatable numeric model. Every category is scored 0–5 and combined into an Overall Friction Score and an AI Opportunity Index.

Seven Categories of Friction

| Category | Examples |
| --- | --- |
| Structural | Approval stacking, ownership gaps, unclear handoffs |
| Cognitive | Ambiguous criteria, judgment overload, decision fatigue |
| Tool | System switching, data duplication, format mismatches |
| Latency | Queue buildup, waiting on dependencies, batch delays |
| Volume | Capacity mismatches, unpredictable demand spikes |
| Emotional | Escalation dependency, stakeholder anxiety, risk aversion |
| Rework | Return loops, incomplete submissions, repeated reviews |

To make the diagnostic measurable and repeatable, I built a weighted scoring model. Each friction category is scored 0 to 5 and weighted by its typical impact on throughput. The output is three values: an Overall Friction Score (0 to 100), an AI Opportunity Index (0 to 25), and a Risk Indicator Flag. This moves the experience from qualitative advice to quantitative decision support.
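A minimal sketch of how a weighted 0-to-5 scoring model can roll up into a 0-to-100 score follows. The category names come from the taxonomy; the weights are assumed for illustration and are not the values used in Process Lab. The AI Opportunity Index and Risk Indicator Flag are left out because their formulas are not described here.

```python
# ASSUMED weights (integer percentages that sum to 100), for illustration only.
WEIGHTS = {
    "structural": 20,
    "cognitive": 15,
    "tool": 15,
    "latency": 20,
    "volume": 10,
    "emotional": 5,
    "rework": 15,
}
MAX_SCORE = 5  # each category is scored 0 to 5


def overall_friction_score(scores: dict[str, int]) -> float:
    """Combine per-category scores (0-5) into a weighted score from 0 to 100."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    for category in WEIGHTS:
        if not 0 <= scores[category] <= MAX_SCORE:
            raise ValueError(f"{category} score must be between 0 and {MAX_SCORE}")
    weighted_sum = sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)
    # Weights sum to 100, so dividing by the maximum score yields a 0-100 scale.
    return weighted_sum / MAX_SCORE


# Invented sample scores, for illustration only.
sample = {
    "structural": 4,
    "cognitive": 2,
    "tool": 3,
    "latency": 5,
    "volume": 1,
    "emotional": 2,
    "rework": 3,
}
print(overall_friction_score(sample))
```

Keeping the weights as integers that sum to 100 avoids floating-point drift and makes the scale easy to audit: all zeros gives 0, all fives gives 100.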

Plain language input reconstructed into discrete process steps.

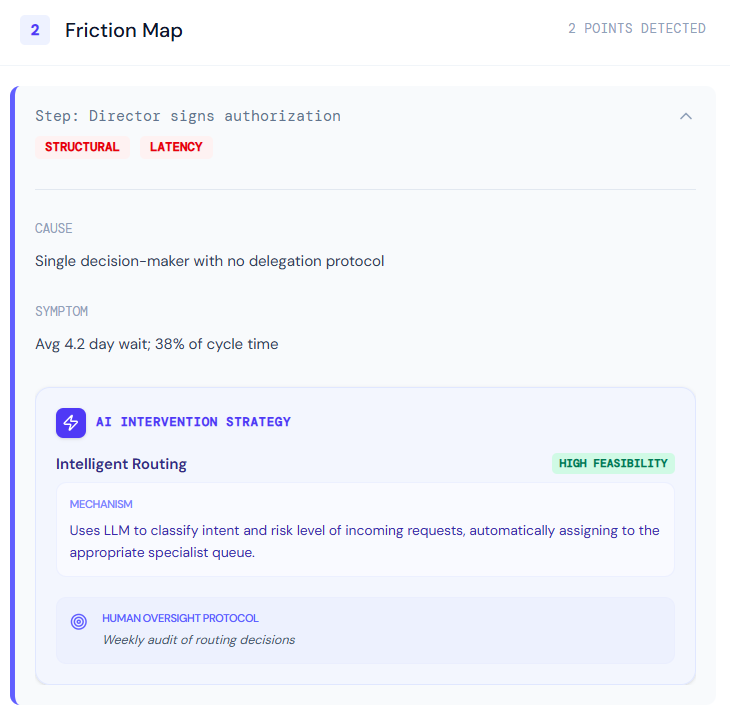

Friction points are identified from the process steps.

AI OPPORTUNITY DETECTION

Structurally grounded, not trend-driven

Rather than generically recommending automation, the system evaluates whether each workflow step is pattern-based, repetitive, rules-driven, text-heavy, routing-dependent, or escalation-triggered. If a step meets those criteria, the system flags the appropriate AI pattern: summarization, triage, decision assist, intelligent routing, predictive scoring, or data extraction.

This matters because it prevents the common mistake of applying AI where it looks impressive rather than where it actually reduces a constraint. Every recommendation is tied to a specific friction point in the workflow, not to a general capability.
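The detection logic described above can be sketched as a lookup from structural traits to candidate AI patterns. The six traits and six patterns are the ones listed in the text, but the pairing between them is an assumption made for this example; the actual mapping used by the system is not specified here.

```python
# ASSUMED pairing of step traits to AI patterns, for illustration only.
TRAIT_TO_PATTERN = {
    "text-heavy": "summarization",
    "escalation-triggered": "triage",
    "rules-driven": "decision assist",
    "routing-dependent": "intelligent routing",
    "pattern-based": "predictive scoring",
    "repetitive": "data extraction",
}


def flag_ai_patterns(step_traits: set[str]) -> list[str]:
    """Return candidate AI patterns for a step, or an empty list if none apply."""
    return sorted({TRAIT_TO_PATTERN[t] for t in step_traits if t in TRAIT_TO_PATTERN})


def recommend(step_name: str, friction: str, step_traits: set[str]) -> list[dict[str, str]]:
    """Tie each flagged pattern to the specific step and friction point."""
    return [
        {"step": step_name, "friction": friction, "pattern": pattern}
        for pattern in flag_ai_patterns(step_traits)
    ]


# Invented example step, for illustration only.
for item in recommend("Intake review", "latency", {"text-heavy", "routing-dependent"}):
    print(item)
```

A step with none of the listed traits yields no recommendation, which mirrors the point that AI is only suggested where the structure of the step supports it.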

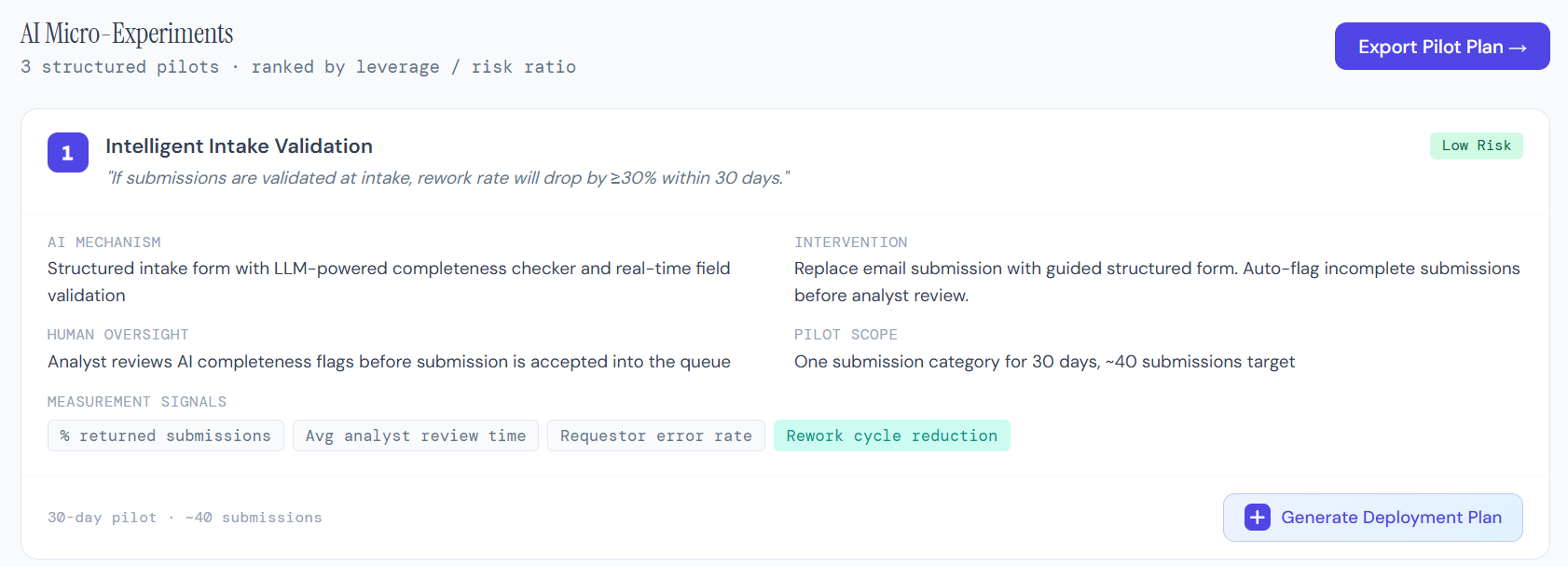

Instead of recommending a full overhaul, the system generates exactly three AI-enabled experiments. Each one includes a hypothesis, the AI mechanism being tested, the structural intervention it addresses, a human oversight plan, lead and lag metrics, and a risk level. The constraints are deliberate: every experiment must be reversible, measurable, and designed with responsible AI principles.

Example experiment card. Each card enforces a hypothesis, AI mechanism, intervention, oversight component, and scope. The system can also use AI to generate a detailed deployment plan.

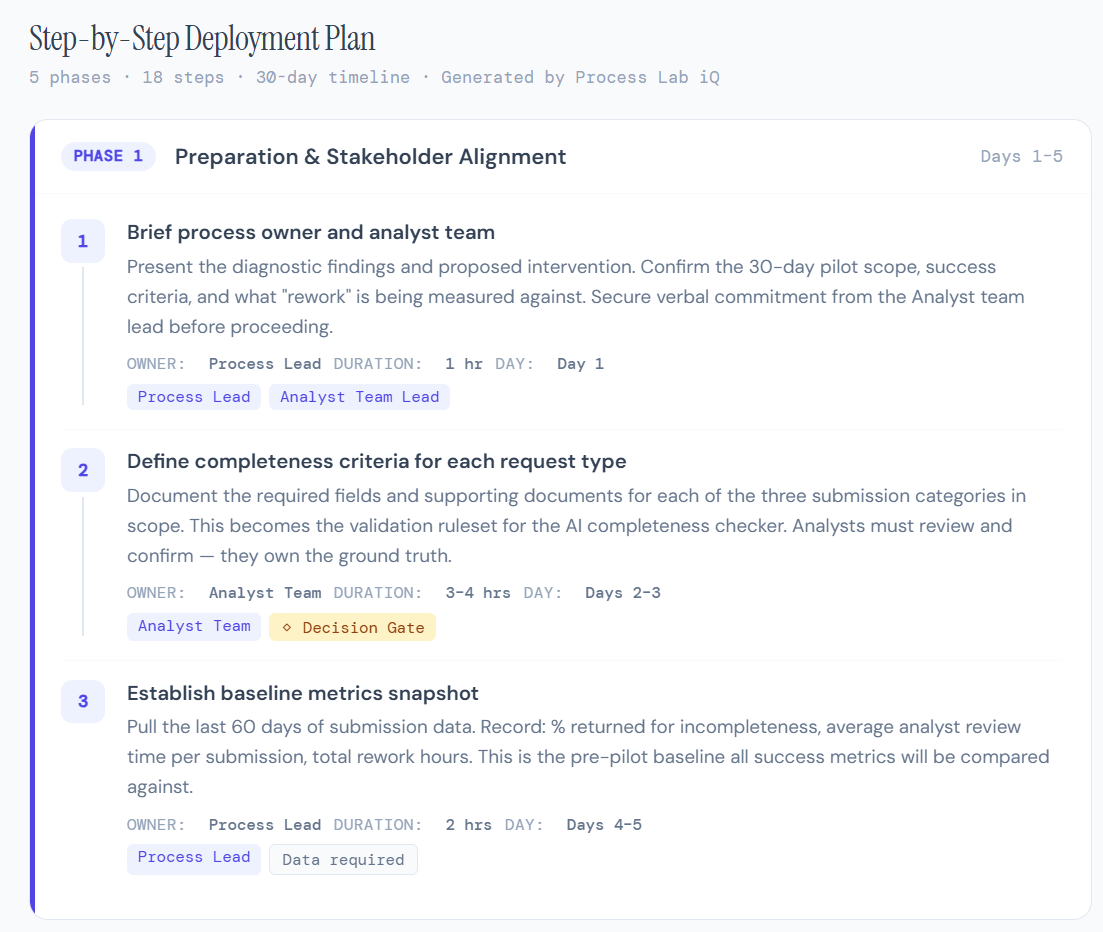

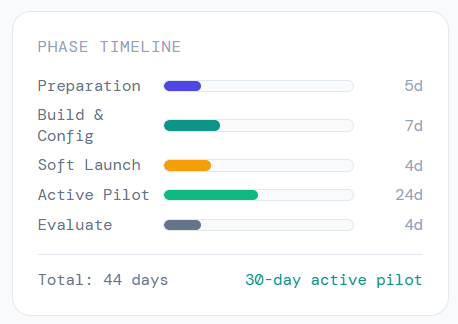

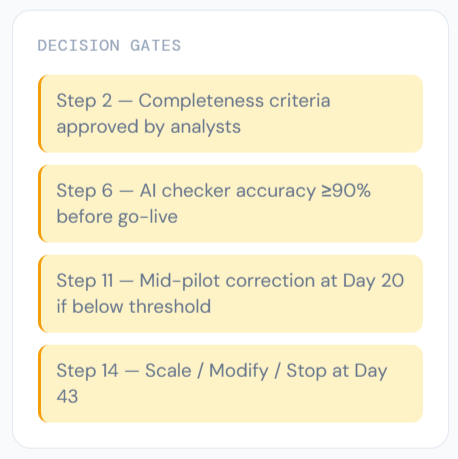

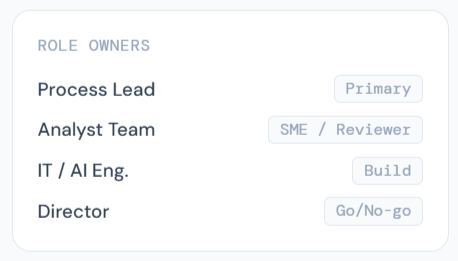

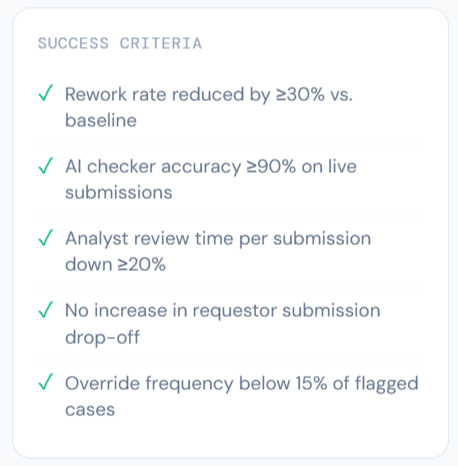

Phase 1 of an example deployment plan along with auto-generated timelines, stakeholders, decision gates, and success criteria.

AI MICRO-EXPERIMENT DESIGN
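The experiment constraints (exactly three experiments, each reversible, measurable, and paired with human oversight) lend themselves to a small validation sketch. The field names, the `RISK_LEVELS` set, and the sample card below are assumptions for illustration and do not reproduce the prototype's schema.

```python
from dataclasses import dataclass

RISK_LEVELS = {"low", "medium", "high"}  # assumed scale


@dataclass(frozen=True)
class Experiment:
    """One experiment card; field names are hypothetical."""
    hypothesis: str
    ai_mechanism: str
    structural_intervention: str
    human_oversight_plan: str
    lead_metrics: tuple[str, ...]
    lag_metrics: tuple[str, ...]
    risk_level: str
    reversible: bool


def validate_experiments(experiments: list[Experiment]) -> list[str]:
    """Return a list of rule violations; an empty list means the set is valid."""
    problems = []
    if len(experiments) != 3:
        problems.append(f"expected exactly three experiments, got {len(experiments)}")
    for number, exp in enumerate(experiments, 1):
        if not exp.reversible:
            problems.append(f"experiment {number} is not reversible")
        if not exp.lead_metrics or not exp.lag_metrics:
            problems.append(f"experiment {number} needs lead and lag metrics")
        if not exp.human_oversight_plan.strip():
            problems.append(f"experiment {number} has no human oversight plan")
        if exp.risk_level not in RISK_LEVELS:
            problems.append(f"experiment {number} has unknown risk level")
    return problems


# Invented sample card, for illustration only.
card = Experiment(
    hypothesis="Summarizing submissions before review shortens sign-off time",
    ai_mechanism="summarization",
    structural_intervention="Reduce reading load at the approval step",
    human_oversight_plan="Reviewer checks every summary against the source",
    lead_metrics=("time spent per review",),
    lag_metrics=("end-to-end cycle time",),
    risk_level="low",
    reversible=True,
)
print(validate_experiments([card, card, card]))
```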

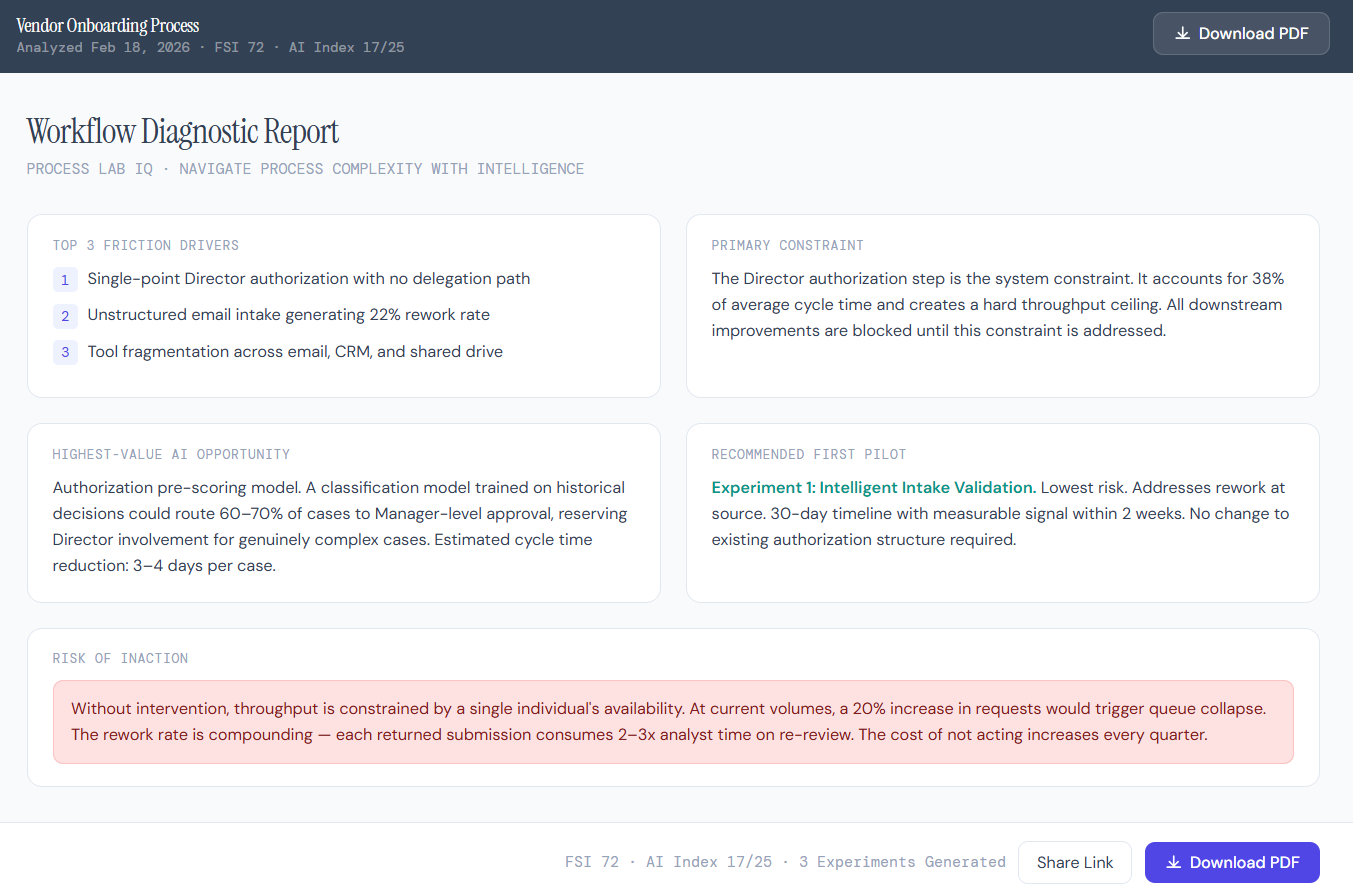

A one-page synthesized output for decision-makers. Leads with the reframed problem, not raw data.

Executive output leads with reframed problem statement. PDF export collapses the full analysis into a decision-ready brief.

EXECUTIVE SUMMARY OUTPUT

WHAT I LEARNED

Most friction turns out to be structural, not behavioral.

Often processes are slow not because of people, but because the system they're working in has too many handoffs, unclear ownership, or decisions stacked on a single person. Fixing the structure changes the behavior without requiring anyone to "do better."

AI works best at bottlenecks, not everywhere. Spreading AI across a workflow dilutes its impact and increases complexity. Concentrating it at the constraint point, the place where throughput is actually limited, creates the most value with the least disruption.

And perhaps most importantly: small experiments beat transformation mandates. Three reversible micro-tests will teach an organization more about AI's actual value than a twelve-month rollout plan ever could.

As organizations adopt AI, the question shouldn’t be, “Where can we use AI?”

A better question might be, “Where is the friction?”
